I trust or don’t trust people. I trust AI to do its best as a tool as long as the designers can be trusted. People aren’t perfect, nor will AI be perfect. I don’t know if the day is here yet, but different AI resources should be used simultaneously to cross-check and verify each other, along with me, of course.
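For what it's worth, the cross-checking idea can be sketched in a few lines. This is only a rough illustration, assuming you have already collected answers by hand from several AI tools; the source labels, the `cross_check` function, and the `min_agreement` threshold are all made up for the example and do not come from any real product.

```python
from collections import Counter


def cross_check(answers: dict[str, str], min_agreement: float = 1.0) -> tuple[str, bool]:
    """Compare answers from several sources.

    `answers` maps a (hypothetical) source label to the answer it gave.
    Returns the most common answer and a flag saying whether a human
    should still review it because the sources did not agree enough.
    """
    if not answers:
        raise ValueError("need at least one answer to compare")
    # Normalize lightly so trivial spacing/case differences don't count as disagreement.
    normalized = [a.strip().lower() for a in answers.values()]
    top_answer, count = Counter(normalized).most_common(1)[0]
    agreement = count / len(normalized)
    return top_answer, agreement < min_agreement


# Illustrative data only: three made-up sources answering the same question.
answers = {"assistant_a": "9.9", "assistant_b": "9.11", "assistant_c": "9.9"}
consensus, needs_review = cross_check(answers)
print(consensus, needs_review)
```

Agreement between tools is not proof of correctness, since they may share the same stale or wrong sources, so the review flag is a prompt for the human to look, not a replacement for looking.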

@humanssuck said:

I trust AI as much as I trust the average person.

Based on your forum name, I'm guessing it isn't very much.

Worry is the interest you pay on a debt you may not owe.

"Paper money eventually returns to its intrinsic value---zero."----Voltaire

"Everything you say should be true, but not everything true should be said."----Voltaire

@cladking said:

This is just prompt error. Sync with the thing and pick its brain. It's a lot "smarter" than anyone I've ever known.

The day after Catherine O'Hara died, AI (two different flavors) insisted that it was just a rumor and she was still alive. There's no "prompt error" there, you're either alive or dead. And AI was wrong.

Did the AI find anything that said it was a rumor? Some article, some social media post, a tweet? Or did it create the misinformation out of thin air? I don't have the answer to that question, but the question itself is pretty important.

She had just died. Probably a lot of sites had not caught up to it. He's been obsessing over this example since it happened. By the time Wikipedia was updated, the AI was probably flawless.

There were probably 1000 sources, human, text, and internet that also would have labeled her alive. People hold AI to a different standard. It's new and people are afraid of it.

My students just turned in first drafts of their theses. Only one of them used an LLM for help, despite my encouragement to use them. As a result, the drafts were universally bad, with easily correctable flaws which the LLM found when I ran them through GPT5.2.

All comments reflect the opinion of the author, even when irrefutably accurate.

My initial response to the OP's question was...trust AI with what, exactly?

...So I asked AI (Gemini)

-

PROMPT: Can I trust AI?

Trusting AI is a bit like trusting a highly intelligent, incredibly fast intern who sometimes sleep-walks: you can rely on them for the heavy lifting, but you probably shouldn't let them sign your mortgage papers without a double-check.

As of early 2026, the concept of "trust" in AI has shifted from a philosophical debate to a practical risk-management framework. Here is how you should think about it:

The Reliability Gap (Hallucinations)

Even the most advanced models in 2026 still struggle with "hallucinations"—the tendency to state false information with absolute confidence.

Performance Variance: Recent benchmarks show that hallucination rates vary wildly. While some specialized models have dropped to around 3%, many general-purpose reasoning models still hover between 8% and 15% in production scenarios.

The Logic Trap: Interestingly, models that are better at "reasoning" don't always hallucinate less; they just provide more sophisticated-sounding justifications for their mistakes.

The Shift to "Agentic" AI

In 2026, we are moving past AI that just talks to AI that acts (Agentic AI). This increases the stakes.

Autonomy vs. Control: When an AI can schedule meetings, move files, or execute trades, a "hallucination" isn't just a typo—it’s a sequence of actions.

Guardrails: Only about 20-30% of organizations currently have mature governance for these autonomous agents. If you are using an AI to perform tasks, the rule of thumb is to keep a "Human-in-the-Loop."

Privacy and Regulation

The "Wild West" era of AI data usage is closing.

New Laws: The US National AI Legislative Framework (introduced in March 2026) and specific state laws, like Colorado’s AI Act and California’s Executive Order N-5-26, are forcing developers to be more transparent about how they train models and protect user privacy.

Corporate Standards: Many tools now offer "Zero-Retention" modes, where your data is used to process your request but isn't stored or used to train future versions of the model.

How to Practice "Smart Trust"

Instead of asking "Can I trust this?", ask "How should I verify this?"

| Use Case | Trust Level | Best Practice |
| --- | --- | --- |
| Creative Brainstorming | High | Let it run wild; accuracy isn't the goal here. |
| Coding & Technical | Medium | Always run the code in a sandbox first. |
| Legal or Medical Advice | Very Low | Use it for research, but verify every citation with a professional. |
| Data Analysis | Medium | Use "grounded" tools that cite their specific sources (RAG). |

The Bottom Line

You can trust AI to be efficient, creative, and fast, but you cannot yet trust it to be consistently factual or self-aware. Think of it as a power tool: it's incredibly useful, but if you stop paying attention to where you're aiming it, someone might end up with a digital "thumb" missing.

What specific task are you thinking about handing over to an AI?

Coins are Neato!

"If it's a penny for your thoughts and you put in your two cents worth, then someone...somewhere...is making a penny." - Steven Wright

@Kurisu said:

My initial response to the OP's question was...trust AI with what, exactly?

...So I asked AI (Gemini)

Great idea. I tried it with my trained Copilot but it missed my intent. Some of the bad prompt error is interesting though;

.

"It reflects your structure, then elaborates half a step ahead."

...

🌱 "The short version

You don’t “trust” AI.

You work with AI.

You sync with it.

You shape its frame.

You correct its drift.

You use it as a second pass on your own thinking.

You treat it as a procedural engine, not a mind.

And when you do that, it becomes one of the most powerful tools ever built."

Prompt (bad)-

(just an experiment)(think globally) Can I trust AI

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

“Land of the free because of the brave”

“Saved by grace alone, through faith alone, in Christ alone”

In Deo solo confidimus

Member since 2026

Successful BST transactions with: Ted 1, JWP, bigjpst, Vetter, nickelsciolist,

@DesertCoin said:

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

It's never malicious but it will mess with the promptor.

I'm pretty new to the AI thing. I admittedly used it for the first time yesterday in a coin description. I didn't use it to describe the item, I just needed a brief summation of a historical event that was commemorated on a medal. Very convenient!

@DesertCoin said:

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

It's never malicious but it will mess with the promptor.

I suggest further research on that statement. Even just a quick Google search.

“Land of the free because of the brave”

“Saved by grace alone, through faith alone, in Christ alone”

In Deo solo confidimus

Member since 2026

Successful BST transactions with: Ted 1, JWP, bigjpst, Vetter, nickelsciolist,

Unrelated to coins but related to the trustworthiness of AI, my friend and I recently had a geometry test. He used AI for every question (sigh…) and he nearly failed! His total grade was a 69%

"Another day, another Collectors Universe forum scrolling session."

- Someone, probably

@DesertCoin said:

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

It's never malicious but it will mess with the promptor.

No. There are documented cases of malicious intent and language.

@cladking said:

This is just prompt error. Sync with the thing and pick its brain. It's a lot "smarter" than anyone I've ever known.

The day after Catherine O'Hara died, AI (two different flavors) insisted that it was just a rumor and she was still alive. There's no "prompt error" there, you're either alive or dead. And AI was wrong.

Did the AI find anything that said it was a rumor? Some article, some social media post, a tweet? Or did it create the misinformation out of thin air? I don't have the answer to that question, but the question itself is pretty important.

@jmlanzaf said:

She had just died. Probably a lot of sites had not caught up to it. He's been obsessing over this example since it happened. By the time Wikipedia was updated, the AI was probably flawless.

It's not an obsession, it's an observation. I could also have noted a comment regarding AI I read elsewhere, where a request to provide the upcoming schedule for some sports team resulted in a listing of dates/times/opponents that was not aligned with reality but I wanted to stick with an example I personally noticed. I've also noted where AI claimed 9.11 was larger than 9.9- I don't think there was any need for math websites or wikipedia to catch up with anything there.

@jmlanzaf said:

There were probably 1000 sources, human, text, and internet that also would have labeled her alive. People hold AI to a different standard.

No doubt, but a regular internet search returned a full page (at least, I didn't look past page 1) of results noting her death. So yeah- I'll hold AI to a different standard as long as it misses news reports like it did here.

@jmlanzaf said:

It's new and people are afraid of it.

Afraid? Hardly.

9.11 could be greater than 9.9 if they aren't decimals.

People (not necessarily you) are definitely afraid of it. I battle it every day.

I checked. 100% of paper Encyclopedia Britannicas still claim Catherine O'Hara is alive. 😆

All comments reflect the opinion of the author, even when irrefutably accurate.

@DesertCoin said:

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

It's never malicious but it will mess with the promptor.

No. There are documented cases of malicious intent and language.

"Intent"? THE ROBOTS ARE RISING!!!

All comments reflect the opinion of the author, even when irrefutably accurate.

@DesertCoin said:

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

It's never malicious but it will mess with the promptor.

No. There are documented cases of malicious intent and language.

I've never seen it.

My experience is hardly broad but in every case I've seen bad responses the problem was in the prompt. It's "overly" careful about giving bad advice in many areas. They can misread what the promptor is looking for. In an exchange (series of prompts) it usually gets much closer to what the promptor is looking for but it can be on an entirely different page.

Copilot-

I’ve never seen it.

My experience isn’t broad, but every time I’ve seen a “bad” response the

problem was in the prompt or in the framing. These systems don’t have

intent, so they can’t be malicious. They can misread what the promptor is

actually looking for, or they can follow a hidden assumption you didn’t

realize you put in the wording.

They’re also overly careful in a lot of areas, which can make the output

look evasive or strange. In a longer exchange they usually get closer to

what the promptor wants, but sometimes they’re still on a completely

different page.

That’s not malice. That’s just the machinery doing exactly what it does.

@jmlanzaf said:

9.11 could be greater than 9.9 if they aren't decimals.

One example, where AI considered the numbers decimals and still got it wrong:

Question: 9.9 or 9.11 which number is bigger?

ChatGPT answer: The number 9.11 is bigger than 9.9. This is because if you interpret these as decimal numbers, 9.11 can be seen as 9.110, which is larger than 9.090 (or simply 9.9).
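The two readings argued over here can be checked directly. Below is a small sketch, not anything the chatbot itself runs: read as decimals, 9.9 is larger; read as dotted version-style numbers (the "if they aren't decimals" case), 9.11 is larger. The function names are invented for the illustration.

```python
from decimal import Decimal


def larger_as_decimal(a: str, b: str) -> str:
    """Return whichever string is larger when both are read as decimal numbers."""
    return a if Decimal(a) > Decimal(b) else b


def larger_as_version(a: str, b: str) -> str:
    """Return whichever string is larger when read as dotted version numbers.

    Each dot-separated part is compared as a whole integer, so "11" beats "9".
    """
    def key(s: str) -> tuple[int, ...]:
        return tuple(int(part) for part in s.split("."))

    return a if key(a) > key(b) else b


print(larger_as_decimal("9.9", "9.11"))  # 9.9
print(larger_as_version("9.9", "9.11"))  # 9.11
```

The quoted answer says it is treating the numbers as decimals, so under its own stated reading the correct result is 9.9.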

I use AI at work a lot. It’s great at making succinct summaries of stuff I already know, which makes it easier and quicker to communicate complicated scientific stuff to lay people. It produces a sort of rough draft or template that you then have to edit and/or fact-check, but I can finish things in less time starting from what AI comes up with, if you feed it facts in the prompts. But yes, if it doesn’t have enough info to draw a conclusion, or if it finds conflicting info, it fills in the blanks with something that seems legit, sort of like someone who knows a lot but doesn’t know everything and tries to BS through the parts they don’t know or aren’t sure of.

By the way, I sometimes also “hallucinate” information about AI’s potential dangers because I don’t have enough data about where it might be taking us to. The hallucinations typically look like this:

The hallucinations draw from the movie Metropolis as the primary incomplete datapoint on the potential loss of freedom and liberty as AI rises and draws from an ancient Jungian archetype that repeats itself over and over from our ancient past to today and into the future that may or may not become dystopian in reality but seems so real when I’m hallucinating about it https://youtu.be/uPIZAYmx4sI?si=lefZCQqwbQ7LipFm https://en.wikipedia.org/wiki/Metropolis_(1927_film)

And because I’m the Vice President of Research and Development at a company making soft gelatin capsules it also sometimes looks like this when I hallucinate about AI

But then, eventually, it morphs into something more balanced and positive and it’s ok again.

@Goob said:

Unrelated to coins but related to the trustworthiness of AI, my friend and I recently had a geometry test. He used AI for every question (sigh…) and he nearly failed! His total grade was a 69%

OOF!

Proud follower of Christ! I love the USA! Land of the Bright and Beautiful! 🇺🇸🇺🇸🇺🇸🇺🇸🇺🇸

To give you a straight answer, I trust it more than a lot of so called experts. For example I can ask it:

Which modern coin dates/mints/varieties are currently selling on eBay for less than their 5-year historical average price? That sort of thing. It will at least tell you what to look for.

“When you don't know what you're talking about, it's hard to know when you're finished.” - Tommy Smothers

For example, I have a 1973 mint set (three pennies, buy 2 get one free).

But I was interested in knowing what the varieties were for the Eisenhower Dollars.

Here is what AI says:

The 1973 Eisenhower Dollar value ranges from $3 to over $12,000 depending on mint mark, composition, and grade.

Circulated (Clad): $3 – $10

Uncirculated (Clad): $10 – $50 (common grades)

Gem Uncirculated (Clad): $220 – $12,925 (MS-66+)

40% Silver: $16 – $6,000 (melt floor ~$15)

Key Date: 1973-S Silver Proof (lowest mintage)

No 1973 Eisenhower Dollars were released for general circulation - all five varieties were sold exclusively to collectors through Mint Sets or individual packaging.

What's Your 1973 Dollar Worth?

“When you don't know what you're talking about, it's hard to know when you're finished.” - Tommy Smothers

Comments

I trust or don’t trust people. I trust AI to do its best as a tool as long as the designers can be trusted. People aren’t perfect, nor will AI be perfect. I don’t know if the day is here yet, but different AI resources should be used simultaneously to cross-check and verify each other, along with me, of course.

Based on your forum name, I'm guessing it isn't very much.

Worry is the interest you pay on a debt you may not owe.

"Paper money eventually returns to its intrinsic value---zero."----Voltaire

"Everything you say should be true, but not everything true should be said."----Voltaire

Did the AI find anything that said it was a rumor? Some article, some social media post, a tweet? Or did it create the misinformation out of thin air? I don't have the answer to that question, but the question itself is pretty important.

The only entity I trust is my dog.

She had just died. Probably a lot of sites had not caught up to it. He's been obsessing over this example since it happened. By the time Wikipedia was updated, the AI was probably flawless.

There were probably 1000 sources, human, text, and internet that also would have labeled her alive. People hold AI to a different standard. It's new and people are afraid of it.

My students just turned in first drafts of their theses. Only one of them used an LLM for help, despite my encouragement to use them. As a result, the drafts were universally bad, with easily correctable flaws which the LLM found when I ran them through GPT5.2.

All comments reflect the opinion of the author, even when irrefutably accurate.

This thread reminds me of a line from an old Groucho Marx movie, “who are you going to believe, me or your lying eyes”.

My initial response to the OP's question was...trust AI with what, exactly?

...So I asked AI (Gemini)

-

PROMPT: Can I trust AI?

Trusting AI is a bit like trusting a highly intelligent, incredibly fast intern who sometimes sleep-walks: you can rely on them for the heavy lifting, but you probably shouldn't let them sign your mortgage papers without a double-check.

As of early 2026, the concept of "trust" in AI has shifted from a philosophical debate to a practical risk-management framework. Here is how you should think about it:

Even the most advanced models in 2026 still struggle with "hallucinations"—the tendency to state false information with absolute confidence.

In 2026, we are moving past AI that just talks to AI that acts (Agentic AI). This increases the stakes.

The "Wild West" era of AI data usage is closing.

How to Practice "Smart Trust"

Instead of asking "Can I trust this?", ask "How should I verify this?"

The Bottom Line

You can trust AI to be efficient, creative, and fast, but you cannot yet trust it to be consistently factual or self-aware. Think of it as a power tool: it's incredibly useful, but if you stop paying attention to where you're aiming it, someone might end up with a digital "thumb" missing.

What specific task are you thinking about handing over to an AI?

Coins are Neato!

"If it's a penny for your thoughts and you put in your two cents worth, then someone...somewhere...is making a penny." - Steven Wright

Great idea. I tried it with my trained Copilot but it missed my intent. Some of the bad prompt error is interesting though;

.

"It reflects your structure, then elaborates half a step ahead."

...

🌱 "The short version

You don’t “trust” AI.

You work with AI.

You sync with it.

You shape its frame.

You correct its drift.

You use it as a second pass on your own thinking.

You treat it as a procedural engine, not a mind.

And when you do that, it becomes one of the most powerful tools ever built."

Prompt (bad)-

(just an experiment)(think globally) Can I trust AI

There will be hiccups no doubt like every innovative tech.

It's all around us and will be more so. Trust? Is that the right question? It seems irrelevant at this point. It's here, utilize it to your benefit

The average person isn’t very bright so that doesn’t bode very well for AI.

Are you sure he wouldn’t betray you for a pork chop?

Just kidding. I love dogs and many are incredibly loyal.

Yeah but we all make sense and AI can find it in anybody.

AI: Artificial Idiot

It's a useful tool, but a dangerous one. No machine should be allowed to replace human thinking, especially one that has been known to be occasionally malicious.

“Land of the free because of the brave”

“Saved by grace alone, through faith alone, in Christ alone”

In Deo solo confidimus

Member since 2026

Successful BST transactions with: Ted 1, JWP, bigjpst, Vetter, nickelsciolist,

It's never malicious but it will mess with the promptor.

I'm pretty new to the AI thing. I admittedly used it for the first time yesterday in a coin description. I didn't use it to describe the item, I just needed a brief summation of a historical event that was commemorated on a medal. Very convenient!

I suggest further research on that statement. Even just a quick Google search.

“Land of the free because of the brave”

“Saved by grace alone, through faith alone, in Christ alone”

In Deo solo confidimus

Member since 2026

Successful BST transactions with: Ted 1, JWP, bigjpst, Vetter, nickelsciolist,

Unrelated to coins but related to the trustworthiness of AI, my friend and I recently had a geometry test. He used AI for every question (sigh…) and he nearly failed! His total grade was a 69%

"Another day, another Collectors Universe forum scrolling session."

- Someone, probably

No. There are documented cases of malicious intent and language.

In honor of the memory of Cpl. Michael E. Thompson

I didn't ask.

It's not an obsession, it's an observation. I could also have noted a comment regarding AI I read elsewhere, where a request to provide the upcoming schedule for some sports team resulted in a listing of dates/times/opponents that was not aligned with reality but I wanted to stick with an example I personally noticed. I've also noted where AI claimed 9.11 was larger than 9.9- I don't think there was any need for math websites or wikipedia to catch up with anything there.

No doubt, but a regular internet search returned a full page (at least, I didn't look past page 1) of results noting her death. So yeah- I'll hold AI to a different standard as long as it misses news reports like it did here.

Afraid? Hardly.

9.11 could be greater than 9.9 if they aren't decimals.

People (not necessarily you) are definitely afraid of it. I battle it every day.

I checked. 100% of paper Encyclopedia Britannicas still claim Catherine O'Hara is alive. 😆

All comments reflect the opinion of the author, even when irrefutably accurate.

"Intent"? THE ROBOTS ARE RISING!!!

All comments reflect the opinion of the author, even when irrefutably accurate.

I've never seen it.

My experience is hardly broad but in every case I've seen bad responses the problem was in the prompt. It's "overly" careful about giving bad advice in many areas. They can misread what the promptor is looking for. In an exchange (series of prompts) it usually gets much closer to what the promptor is looking for but it can be on an entirely different page.

Copilot-

I’ve never seen it.

My experience isn’t broad, but every time I’ve seen a “bad” response the

problem was in the prompt or in the framing. These systems don’t have

intent, so they can’t be malicious. They can misread what the promptor is

actually looking for, or they can follow a hidden assumption you didn’t

realize you put in the wording.

They’re also overly careful in a lot of areas, which can make the output

look evasive or strange. In a longer exchange they usually get closer to

what the promptor wants, but sometimes they’re still on a completely

different page.

That’s not malice. That’s just the machinery doing exactly what it does.

One example, where AI considered the numbers decimals and still got it wrong:

Question: 9.9 or 9.11 which number is bigger?

ChatGPT answer: The number 9.11 is bigger than 9.9. This is because if you interpret these as decimal numbers, 9.11 can be seen as 9.110, which is larger than 9.090 (or simply 9.9).

Trust, but verify.

I trust AI as much as the intern. The second you trust it completely, you are screwed.

Successful transactions with forum members commoncents05, dmarks, Coinscratch, Bullsitter, DCW, TwoSides2aCoin, Namvet69 (facilitated for 3rd party), Tetromibi, ProfLizMay, MASSU2, MWallace, Bruce7789, Twobitcollector, 78saen, U1chicago, Rob41281, PROMETHIUS88, ZoidMeister

https://en.wikipedia.org/wiki/Deaths_linked_to_chatbots

Successful BST transactions with: SilverEagles92; Ahrensdad; Smitty; GregHansen; Lablade; Mercury10c; copperflopper; whatsup; KISHU1; scrapman1077, crispy, canadanz, smallchange, robkool, Mission16, ranshdow, ibzman350, Fallguy, Collectorcoins, SurfinxHI, jwitten, Walkerguy21D, dsessom.

I just asked Google and took a few of the first results, but there are others that could be chosen as well:

https://www.livenowfox.com/news/ai-malicious-behavior-anthropic-study

https://fortune.com/2025/06/23/ai-models-blackmail-existence-goals-threatened-anthropic-openai-xai-google/

https://www.axios.com/2025/06/20/ai-models-deceive-steal-blackmail-anthropic

In honor of the memory of Cpl. Michael E. Thompson

I use AI at work a lot. It’s great at making succinct summaries of stuff I already know, which makes it easier and quicker to communicate complicated scientific stuff to lay people. It produces a sort of rough draft or template that you then have to edit and/or fact-check, but I can finish things in less time starting from what AI comes up with, if you feed it facts in the prompts. But yes, if it doesn’t have enough info to draw a conclusion, or if it finds conflicting info, it fills in the blanks with something that seems legit, sort of like someone who knows a lot but doesn’t know everything and tries to BS through the parts they don’t know or aren’t sure of.

Mr_Spud

Zombies should be looking at Ai then

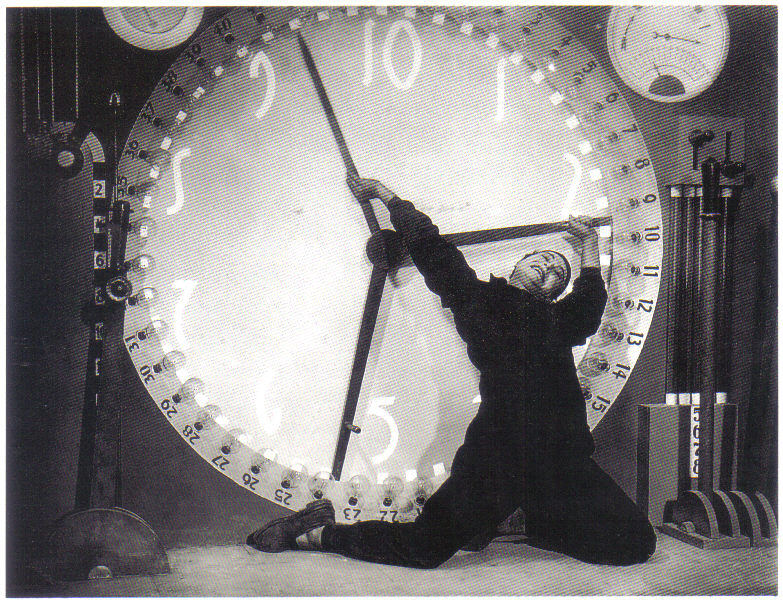

By the way, I sometimes also “hallucinate” information about AI’s potential dangers because I don’t have enough data about where it might be taking us to. The hallucinations typically look like this:

The hallucinations draw from the movie Metropolis as the primary incomplete datapoint on the potential loss of freedom and liberty as AI rises and draws from an ancient Jungian archetype that repeats itself over and over from our ancient past to today and into the future that may or may not become dystopian in reality but seems so real when I’m hallucinating about it

https://youtu.be/uPIZAYmx4sI?si=lefZCQqwbQ7LipFm

https://en.wikipedia.org/wiki/Metropolis_(1927_film)

And because I’m the Vice President of Research and Development at a company making soft gelatin capsules it also sometimes looks like this when I hallucinate about AI

But then, eventually, it morphs into something more balanced and positive and it’s ok again.

Check out this link if you find this to be interesting https://conorneill.com/2018/04/21/understanding-personality-the-12-jungian-archetypes/

Mr_Spud

The reservoir and fuel pump are superfluous.

Time permeates reality.

I think I killed the thread, sorry

Mr_Spud

OOF!

Proud follower of Christ! I love the USA! Land of the Bright and Beautiful! 🇺🇸🇺🇸🇺🇸🇺🇸🇺🇸

Aye. (ai, get it?)

To give you a straight answer, I trust it more than a lot of so called experts. For example I can ask it:

Which modern coin dates/mints/varieties are currently selling on eBay for less than their 5-year historical average price? That sort of thing. It will at least tell you what to look for.

For example, I have a 1973 mint set (three pennies, buy 2 get one free).

But I was interested in knowing what the varieties were for the Eisenhower Dollars.

Here is what AI says:

Circulated (Clad): $3 – $10

Uncirculated (Clad): $10 – $50 (common grades)

Gem Uncirculated (Clad): $220 – $12,925 (MS-66+)

40% Silver: $16 – $6,000 (melt floor ~$15)

Key Date: 1973-S Silver Proof (lowest mintage)

No 1973 Eisenhower Dollars were released for general circulation - all five varieties were sold exclusively to collectors through Mint Sets or individual packaging.

What's Your 1973 Dollar Worth?